These past few years, scholarly publishing has seen some remarkably self-destructive moves by corporate publishers. Spearheading the self-destructive campaign is, of course, Elsevier (aka Evilsevier). They were barely out of the arms-trade business when the news broke that they had designed several advertisements for Merck products to look like peer-reviewed journals. These fake journals were then distributed to doctors, without any indication that these 'journals' were, in fact, advertisements. Around the same time, Elsevier, Wiley and the American Chemical Society approached Eric Dezenhall (of Enron and Exxon fame) in order to discredit the open access movement. A little later, Elsevier sponsored two lawmakers in the US to propose a bill that would make open access mandates by research funders like the NIH illegal, ultimately sparking a world-wide counter-movement to boycott Elsevier, in what was called the "Academic Spring".

Not to be outdone by the 10k pound gorilla of scholarly publishing, humanities monograph publisher Edwin Mellen just sued a librarian critical of their monographs for a total of US$4.5 million in damages. Apparently, corporate publishers are nervous and afraid of losing their taxpayer-funded cash cows, which have allowed them to post record profits as if there never was any international financial crisis. Astoundingly, their 'defense' is to treat their customers as their enemies and lash out against them on every possible occasion. Moreover, if Elsevier's behavior above is anything to go by, we, their customers (i.e., scientists and librarians), are not only their enemies, we're also stupid and gullible, since the corporate publishers apparently do not expect any serious retribution for their actions. How else would one explain such blatantly self-destructive behavior?

Corporate publishers behave more and more like the music industry and will likely go the same way into irrelevance, eventually. Since they seem to be so hell-bent on their own destruction, let's help them along the way. Here's what you can do:

- Sign the petition to support librarian Dale Askey against publisher Edwin Mellen.

- Ask your library to drop subscriptions to journals from corporate publishers like Elsevier and to refrain from buying books from Edwin Mellen. Instead, improve the efficiency of inter-library loan and discuss with your library how they can become open access publishers themselves, just like my library here.

Help put corporate publishers out of their misery and talk to your library about alternatives.

Posted on Monday 11 February 2013 - 10:27:44 comment: 2

Evidence is the basis of all science. Evidence should also be the basis of all policies. Concerning scholarly communication, one central piece of evidence is the cost of knowledge, i.e., how much we are spending on our communication system, and the associated question of whether we get what we pay for. One point that has always irked me was that I couldn't find any reliable figures as to how much we (i.e., our university libraries) are actually spending on the journals we use. The data from the Association of Research Libraries are behind a paywall and I'm not even sure these data cover all the university libraries in the US.

The data for the German libraries are not behind a paywall, however. At the German Library Statistics, you can get all the info you want. You can even query their database, very cool! You can check every one of the 250 university libraries and how much they spent on what in which year. Here are a few things I found out:

German libraries spent in 2011

- 170 million € on books

- 130 million € on subscriptions

So much for some solid numbers and statistics. What keeps irritating me is that it appears to be tricky to get that sort of data from countries around the globe, or some global estimate. The estimates for scholarly publishing sales I have seen vary from less than 10b to 20b annually. Assuming the German data were representative of university libraries world-wide (maybe rounded down to $500k per library annually to account for the fact that Germany might be spending more on education than other countries), one can estimate that 9000 universities world-wide together spend about US$4.5b on subscriptions every year (again, using averages, not medians). Again, given the conservative estimate of a 30% publishers' profit margin (and all costs remaining constant), a library-based communication system replacing scholarly journals would stand to save about $1.5b every year, globally. Clearly, these extrapolations are somewhat dodgy, but they should at least be in the right ballpark. They're a little less than half of what I used to reference, which might be explained by my previous sources probably including book sales.
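For anyone who wants to retrace the extrapolation, here is a minimal sketch of the arithmetic, using only the figures quoted above. The variable and function names are my own illustrative choices, and the euro/dollar difference is ignored, as in the rough rounding in the text. At a flat 30% margin the product comes to US$1.35b, the same ballpark as the roughly $1.5b quoted, which would correspond to a somewhat higher margin.

```python
# Back-of-the-envelope extrapolation using the figures quoted in the post.
# All names are illustrative; currency conversion is deliberately ignored.

GERMAN_SUBSCRIPTION_SPEND_EUR = 130e6  # subscriptions, German university libraries, 2011
GERMAN_UNIVERSITY_LIBRARIES = 250      # libraries covered by the statistics
PER_LIBRARY_SPEND_USD = 500e3          # the post's rounded-down per-library figure
UNIVERSITIES_WORLDWIDE = 9000          # the post's estimate
PROFIT_MARGIN = 0.30                   # the post's conservative publisher margin


def mean_spend_per_library(total_spend: float, libraries: int) -> float:
    """Average subscription spend per library."""
    return total_spend / libraries


def global_spend(per_library: float, universities: int) -> float:
    """Extrapolated world-wide subscription spend."""
    return per_library * universities


def potential_savings(total_spend: float, margin: float) -> float:
    """Share of the spend that corresponds to publisher profit."""
    return total_spend * margin


german_mean = mean_spend_per_library(
    GERMAN_SUBSCRIPTION_SPEND_EUR, GERMAN_UNIVERSITY_LIBRARIES
)
world_total = global_spend(PER_LIBRARY_SPEND_USD, UNIVERSITIES_WORLDWIDE)
savings = potential_savings(world_total, PROFIT_MARGIN)

print(f"Mean German spend per library: {german_mean:,.0f} EUR")
print(f"Estimated global spend: {world_total / 1e9:.2f}b USD")
print(f"Savings at a {PROFIT_MARGIN:.0%} margin: {savings / 1e9:.2f}b USD")
```

Changing `PER_LIBRARY_SPEND_USD` or `PROFIT_MARGIN` shows how sensitive the global estimate is to these two assumptions.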

What one could also see was that an average German library in 2011 subscribed to 2k print journals and 15k e-journals, at an average cost of 34€ per title. Obviously, with the largest libraries subscribing to more than 60k e-titles, this must mean that these data include more than just peer-reviewed scholarly journals. Either way, I can use these numbers to check the debate mentioned here before, whether or not per-title costs have actually decreased in recent years. For German libraries this is easy to check: I can get the number of periodicals as well as the money spent on them. In 2008, the average subscription price was 48€ per title, so at least these two data points seem to corroborate the publishers' statement that per-title costs are decreasing. Obviously, trends and absolute prices will vary from location to location or from field to field. For instance, in 2011, the average cost for a printed periodical in the sciences was 540€, and the KIT library annually publishes a list of its most expensive journals, with the most expensive one netting a whopping 20k€ per year. Anyway, that's the big picture for German university libraries, and for those so inclined, more refined statistics can be generated from the data there.
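The size of that per-title decrease can be put into a single number. The following is only a sketch based on the two data points mentioned above (48€ in 2008, 34€ in 2011); the function name is a hypothetical helper, not anything provided by the German Library Statistics.

```python
# Relative change in the average price per title between the two
# data points quoted in the post. Names are illustrative.

PRICE_PER_TITLE_2008_EUR = 48.0
PRICE_PER_TITLE_2011_EUR = 34.0


def relative_change(old: float, new: float) -> float:
    """Fractional change from old to new (negative means a decrease)."""
    return (new - old) / old


change = relative_change(PRICE_PER_TITLE_2008_EUR, PRICE_PER_TITLE_2011_EUR)
print(f"Change in average price per title, 2008 to 2011: {change:.1%}")
```

With only two data points this says nothing about the trend in between, and the average mixes scholarly journals with whatever else is counted as a periodical.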

Obviously, these are not the numbers I would ideally like to rely on, but at least they appear to be more accurate than what I've used before.

Posted on Thursday 07 February 2013 - 19:07:36 comment: 0

Now also cross-posted at homolog.us (and slightly edited here to remove any potentially misleading, unintentional implications).

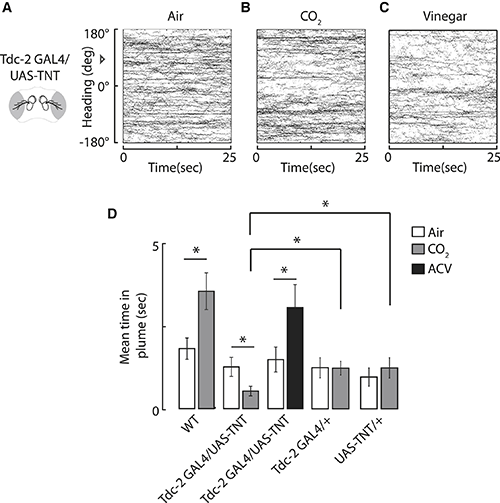

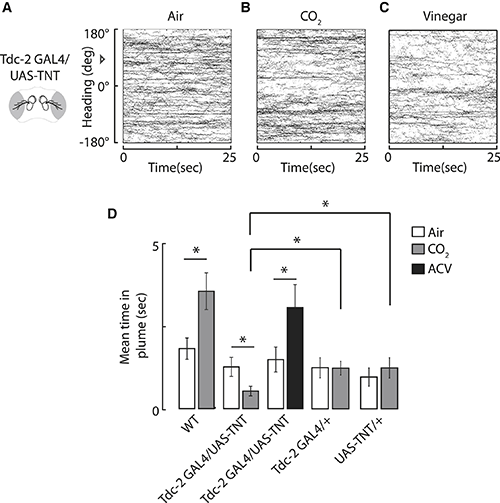

There is a lively discussion going on right now in various forums on the incentives for scientists to publish their work in this venue or another. Some of these discussions cite our manuscript on the pernicious consequences of journal rank, others don't. In our manuscript, we speculate that the scientific community may be facing a deluge of fraud and misconduct, because of the incentives to publish in high-ranking journals, a central point of contention in the discussions linked to above.

However, one need not go to the extreme and (still) very rare cases of misconduct. The pernicious incentives in our reputation system can also lead to much more subtle behaviors that appear rather innocuous at first. For instance, in order to market our research to the journal Science, we invented the term "operant reward learning". This term does not exist, but we felt that the term 'reward' was a buzzword that increased our chances - and it did get published. If everyone did that, it's easy to imagine what a mess (even worse than it already is) the scientific nomenclature would be. Another example of just how subtly these incentives may skew the scientific debate happened to land on my desk this morning in the form of a paper quite close to my own field of research, published in the (for our field) very highly ranked journal "Current Biology".

This paper caught my attention not only because it concerned fly behavior or featured a colleague I happen to know quite well, but because it stated in the abstract that: "blocking synaptic output from octopamine neurons inverts the valence assigned to CO2 and elicits an aversive response in flight". We currently have a few projects in our lab that target these octopamine neurons, so this was a potentially very important finding. It was my postdoc, Julien Colomb, who spotted the problem with this statement first. In fact, had it not been for Julien, I might never have looked at the data myself, as I know the technique and I know and trust the lab the paper was from. I probably would just have committed the contents of the abstract to memory and cited the paper where appropriate, as the results confirmed our data and those in the literature (a clear case of confirmation bias on my part). But have a look at Fig. 3:

The important data to look at are in Fig. 3D. It shows an attraction to CO2 vs. air in wildtype flies (WT), but an aversion in the genetically manipulated flies (Tdc2-GAL4/UAS-TNT). This is what is stated in the abstract: wildtype flies are attracted to CO2 and flies in which octopamine release is blocked avoid CO2. However, the important control experiments are those that test for off-target effects of the genetic manipulation. In other words, do the transgenes inserted into the fly genome have an effect of their own, independent of their combined effect on octopamine? In this case, there are two transgenes, a GAL4 transgene (the driver) and a UAS transgene (the effector). Their CO2 scores are shown at the end (Tdc2-GAL4/+ and UAS-TNT/+, respectively). Interestingly, these lines both show a strongly reduced preference for CO2. Their preference is so strongly reduced that it is not even different from that for air. To put it differently: neither of the two control lines shows normal, wildtype behavior. They may not be able to detect CO2 any more, or have secondary alterations that simply reduce the preference for CO2, or there may be a myriad of other explanations. Importantly, nobody can know if these two effects, each of which alone already reduces the preference for CO2 dramatically, together could lead to an avoidance of CO2 that is completely independent of the targeted octopamine neurons.

In this respect it is important to point out that the authors are not trying to hide this effect. In the text, in what appears to be a contradiction to the abstract, the authors write:

We note that the Tdc2-GAL4/+ driver line does not spend a significantly greater amount of time in the CO2 plume by comparison to air, but this line, as well as the UAS-TNT/+ parent line, spends significantly more time in the CO2 plume in comparison to their progeny. Therefore, this experimental result cannot be fully attributable to the genetic background.

The last sentence, of course, is incorrect: if both lines independently reduce the attractiveness of CO2, then it is very conceivable, one might even say straightforward, that both together might reduce it so much that the resulting value of CO2 is negative, leading to an aversive response in the flies, irrespective of the involvement of octopamine.

Given what is known about the action of octopamine in these processes, the hypothesis that the authors claim to have corroborated is beautiful, makes sense and is biologically plausible. So the result they present in the abstract, "blocking synaptic output from octopamine neurons inverts the valence assigned to CO2", makes this a very sexy paper for the field that unites several disparate findings and puts a whole set of results in a broader perspective (and may well be correct!). Of course, these considerations are crucial for marketing your paper to one of the top journals in the field. Had the authors discarded the octopamine results from their paper, one may speculate that it would have been rather unlikely to be published in Current Biology. It is more difficult to estimate what might have happened if the authors had been more conservative in their approach and rephrased the statement in the abstract to something that would indicate that they had suggestive, but not conclusive, evidence for the involvement of octopamine neurons in CO2 preference. A reasonable speculation would be that reviewers might have asked for additional experiments until such a conclusion could be reached.

To make this unambiguously clear: I can't find any misconduct whatsoever in this paper, only clever marketing of the sort that occurs in almost every 'top-journal' paper these days and is definitely common practice. On the contrary, this is exactly the behavior incentivized by the current system, it's what the system demands, so this is what we get. It's precisely this kind of marketing we refer to in our manuscript, that is selected for in the current evolution of the scientific community. If you don't do it, you'll end up unemployed. It's what we do to stay alive.

In this respect it is worth speculating about the particular incentives the authors of this study might have experienced. The first author is a postdoc in Mark Frye's lab, so she needs to publish in top journals to get a job. The second author was an undergraduate, so likely less involved in the drafting and revising of the paper and the last author is a junior investigator for HHMI, so likely under enormous pressure (or at least perceived pressure) to publish in top journals not only to justify his award, but also to do well in future evaluations. Note that these are pure speculations: while I know Mark Frye personally, I did not contact him or any of the authors for a comment, as I felt the paper should be appraised on its own.

Obviously, this is just a case study, N=1, an anecdote, but I think it exemplifies the incentives and how they can distort the scientific debate. For instance, see the Tweet I sent around after I read the abstract (but before I had a look at the actual data):

Octopamine reverses Carbon Dioxide preference in flies feedly.com/k/Upirw7— Björn Brembs (@brembs) January 25, 2013

UPDATE: Due to the popularity of this post, I'd like to spell out what I alluded to above: there would have been nothing wrong with the paper, had the abstract mentioned that the connection with octopamine was suggestive, but not conclusive.

Wasserman, S., Salomon, A., & Frye, M. (2013). Drosophila Tracks Carbon Dioxide in Flight. Current Biology. DOI: 10.1016/j.cub.2012.12.038

Posted on Friday 25 January 2013 - 13:20:40 comment: 2

Almost every week I receive inquiries from students all over the world for PhD positions. Unfortunately, I have to decline most of them, as all the positions I have are immediately filled. Now, however, there is an opportunity for Indian students to pursue a PhD in our lab. The DAAD is offering Indian students full three-year scholarships for a German PhD (Dr. rer. nat.).

This means that we can offer all the lab and office space, equipment, consumables and other infrastructure for a three-year research project, supervised by myself, Björn Brembs at the University of Regensburg, while the salary of the candidate is subject to the application process of the DAAD.

Therefore, I invite any Indian student interested in neurobiology and about to obtain their Master's degree (i.e., this year) to contact me. The email should be sent to bjoern@brembs.net on or before March 31, 2013 and contain a full CV, including courses taken and their respective grades, as well as a one-page research proposal, referencing one or more of our recent (2008-present) publications as a single PDF document. In April 2013, I will select one to three candidates from the pool of applicants to develop their research proposal into a full-fledged DAAD PhD scholarship application, to submit by October 1, 2013.

This invitation can also be downloaded in PDF format for further distribution.

Posted on Wednesday 23 January 2013 - 17:55:58 comment: 0

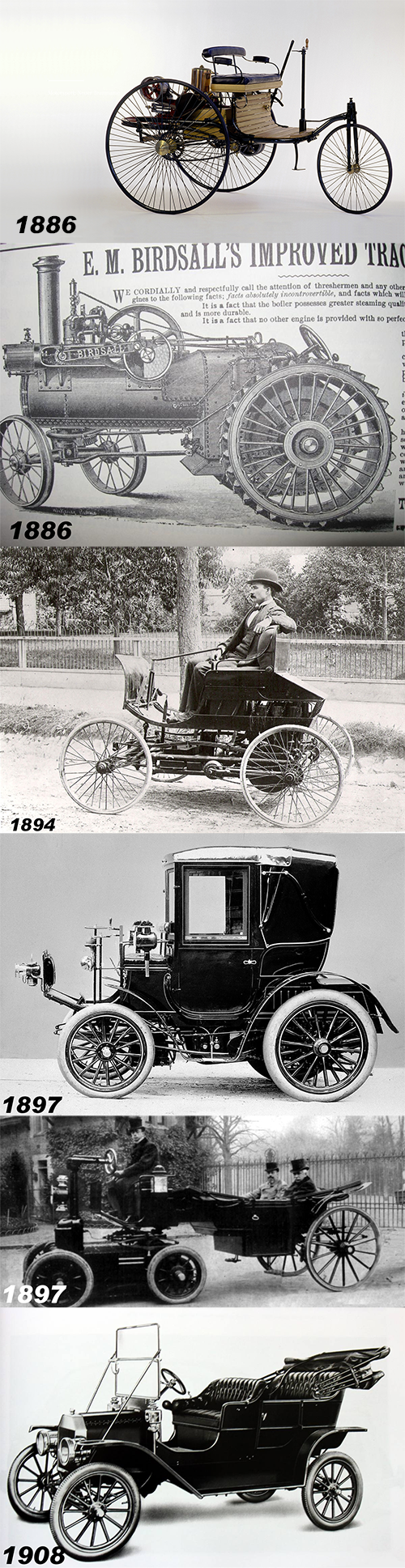

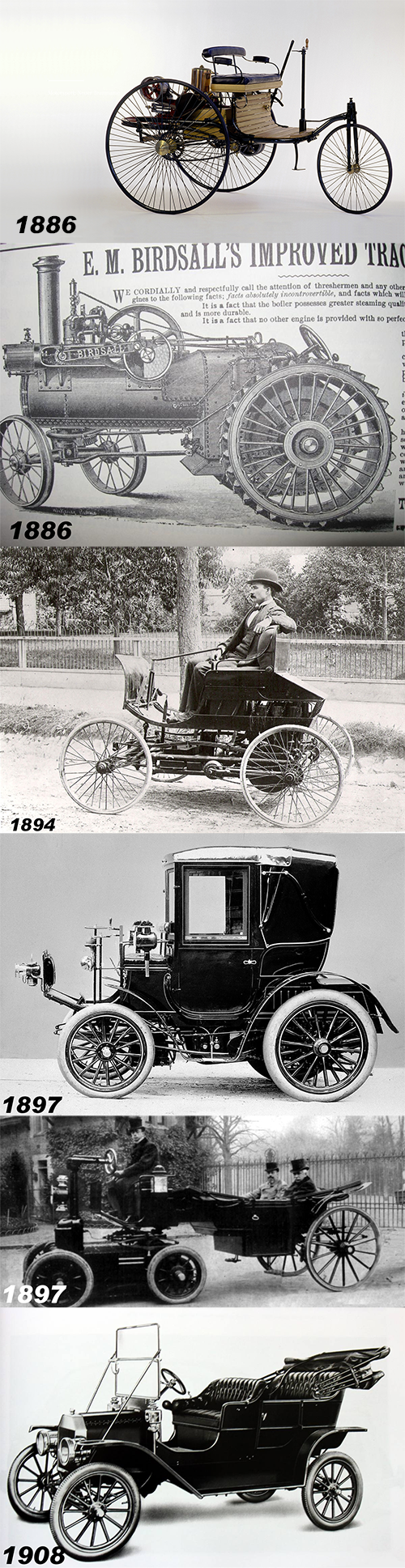

As a kid in school, I once saw a diagram of the succession of horse-drawn carriages, early car-carriages and then cars. I wasn't able to find a good illustration, so I picked some examples I found and made my own sequence:

As a kid, with 20/20 hindsight, I wondered: what was so difficult in making a car look like a car and not like a horse carriage where the horse was missing? While looking for the diagram today, I even found a steam traction engine from the same year Benz built his first car, 1886, which looked much more like a car (with, e.g., steering wheel and all) than any of the actual 'cars' until Ford's Model T only 22 years later. The concept of a steering wheel was not novel, even the very carriage-looking electric car from 1897 (under the Benz Coupé from the same year) appears to have one. And yet, most cars looked like carriages without horses for another eleven years, when all of a sudden, Ford built something that actually looked like a car for the first time.

Why, despite technological role models from other areas, were people trying to make cars look like the familiar carriage for twenty years? Given the success of the Model T, one would assume it wasn't the skepticism of the buyer. It probably wasn't any technological problem, as the steam engine shows. Or was the market just not ripe enough until 1908? Not being a historian, I'm not sure how trivial it is to answer these questions, but the sequence shows that humans tend to hang on to dysfunctional design and technology even in the presence of clearly superior technologies. What is it that makes us choose the inferior traditional over the superior novel, at least for some transition period? How can we spot the superior technology and use it right away, avoiding the mistakes of the past?

I ask myself these questions whenever I observe how corporate publishers, along with too many of my colleagues, discuss the future of scholarly publishing. The scientific community in general is still attached to the carriage model and hesitant to embrace the Model T. We now have modern technology allowing us to build the scholarly communication equivalent of the Model T, and yet, much of the discussion is still focused on which horse to use, and only sometimes on the possibility of actually getting rid of the horse: people wonder what one should use instead of impact factors, how publishing data is a major problem, if the taxpayer should have access to the research they funded, if scholarly societies should still use horses to make money, which paper version should be linked to on PubMed, or what the article of the future should look like, and many other silly things like that. None of these issues would even exist if we dropped all historical baggage and designed a scholarly communication system for the 21st century.

Many people may think Ford was a genius and they may be correct, but to care more about technology than tradition doesn't require genius. Come on people, let's not waste twenty years on horseless carriages, only to find out we could have built a Model T today! Drop the carriage and go for the car!

Posted on Tuesday 22 January 2013 - 17:18:46 comment: 5


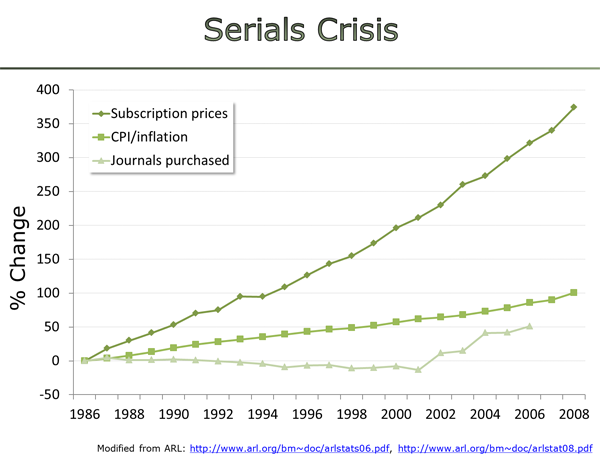

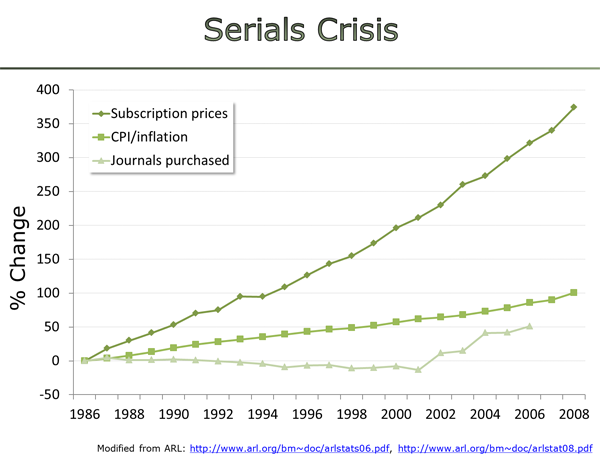

When I describe the behavior of corporate publishers with regard to scholarly communication and tight library budgets, I usually illustrate my point with the following graph:

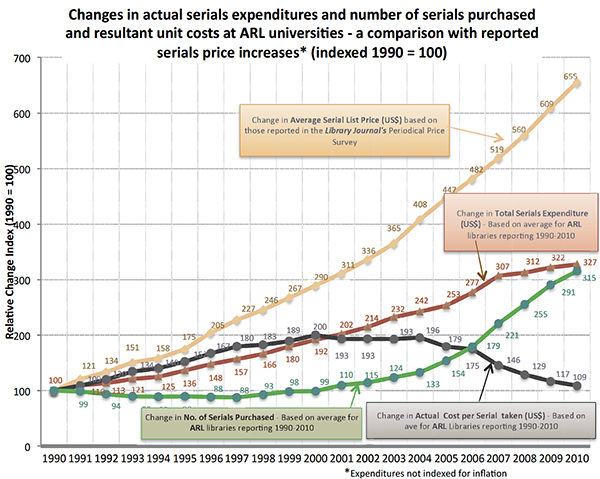

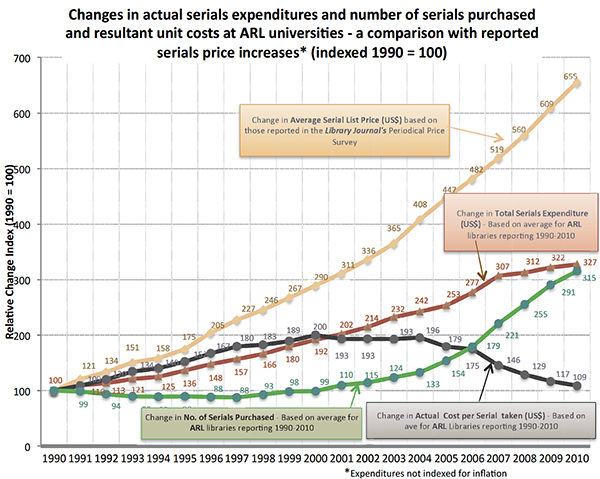

Clearly, subscription costs have increased exorbitantly over the last few decades. In recent years, however, publishers have, in a seemingly glaring contradiction to the available figures, started to claim that instead of increasing costs, they have actually decreased prices. These claims are based on an article by publishing consultant Paula Gantz, which claims that library expenditures need to be divided by the content their money buys. Gantz cites increasing rates of publications, increasing numbers of scientists and increasing numbers of journals as justifications for the hyperinflating costs and provides her readers with the following graph (click for larger version):

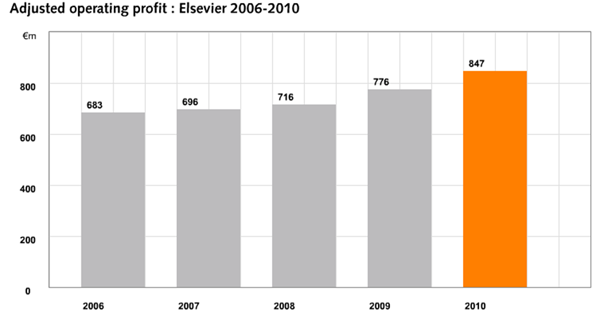

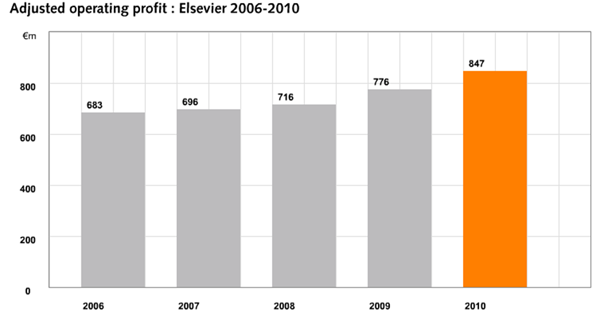

If you look at the black line, you'll notice that the actual cost per journal has decreased since about 2005. On the face of it, this seems to invalidate the argument of libraries that corporate publishers are overcharging them. There are several points that need some clarification, though. For instance, given the negotiation practice of bundling journals together at a discount, would libraries subscribe to that many journals if they had a choice? In other words, is the increase in journal acquisitions starting around 2000 just an arm-twisting measure of publishers to mask further price increases? Is the proliferation of journals largely a publishers' trick to milk yet more money from libraries, or are publishers just jumping on an already rolling bandwagon? The graph above is pretty conspicuous, not only because it comes from the organizations which profit from libraries, but also because it is counter-intuitive: corporate publishers have not only been posting steadily increasing profits with no sign of any international crises anywhere (e.g. Elsevier):

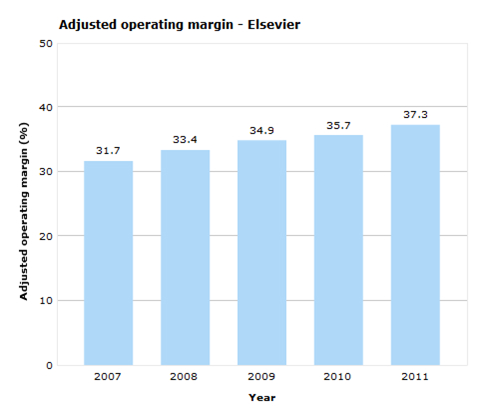

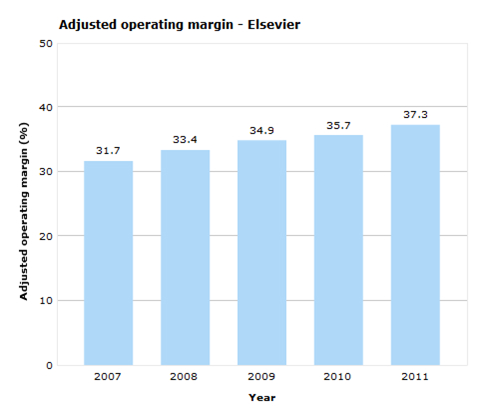

they have also been posting increasing profit margins, i.e., they have been able to reap a larger percentage of their revenues as profits:

This entails that publishers - if Elsevier is anything to go by - have been able to sell their journals at a lower cost to libraries while simultaneously not only increasing profits, but also profit margins. Obviously, this is not an impossible feat, but given the size of the margins (which conforms well with the overall approx. margin of 40% in the industry) this seems rather unlikely and requires some corroborating data from independent sources.

These data have of course raised not only my suspicion. Karen Harker has done some calculations and also finds a range of questions that remain to be answered.

Walt Crawford has done some "quick-and-dirty" calculations on library expenditures for two years of change over essentially all the academic libraries in the U.S.–as reported in the NCES biennial survey. He found that electronic serials expenditures rose by almost a quarter in the two years between 2008 and 2010. There are no figures on the number of journals these expenditures provide access to, but from the figures it is pretty clear by how much these numbers must have risen, in order to lead to an overall price decrease per journal.

The only thing that seems unambiguous between all involved parties at this point is that the costs of scholarly communication overall are skyrocketing at hyperinflation speeds, despite the scientific community only increasing at around 3% annually, slightly above average inflation rates. What is under dispute is whether the money is well spent.

Here, too, one can do some back-of-the-envelope calculations, under the assumption that libraries would try to take over the tasks that publishers currently perform: there are an estimated 50 million published papers. If each of them required an average of 1MB (in a simple PDF format, for example) of storage space, we would need a measly 50 terabytes of space. At a going rate of about 0.04€ per gigabyte, just the storage would require on the order of 2000€, plus, of course, some bandwidth requirements. With a worst-case scenario of about 0.1€ per gigabyte in bandwidth costs, every download of the entire archive would cost around 5000€. Given an estimate of around 1.5 billion downloaded papers per year, total bandwidth costs would be around 150k€ per year. This cost is also negligible, particularly as, at least in Europe, bandwidth is covered by the institutional network infrastructure, which, in Germany, is covered by federal and state budgets already. But even if these costs were not covered, they would be spread across about 9000 university libraries, making them a minuscule average of 17€ per library and year. Thus, pure hardware and bandwidth costs are entirely negligible in the grand scheme of things, if we omit software and data archiving.
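For anyone who wants to check or vary these numbers, here is a minimal sketch of the same arithmetic in Python. The constants are the rough estimates from the paragraph above; the function names and the choice of decimal units (1 GB = 1000 MB) are my own assumptions for illustration.

```python
# Rough estimates taken from the text; none of these are measured values.
PAPERS = 50_000_000                 # estimated number of published papers
MB_PER_PAPER = 1                    # assumed average size of a simple PDF
STORAGE_EUR_PER_GB = 0.04           # going rate for storage
BANDWIDTH_EUR_PER_GB = 0.10         # worst-case bandwidth price
DOWNLOADS_PER_YEAR = 1_500_000_000  # estimated paper downloads per year
LIBRARIES = 9_000                   # approximate number of university libraries

MB_PER_GB = 1000  # assumption: decimal units


def archive_size_gb():
    """Size of the whole archive in gigabytes."""
    return PAPERS * MB_PER_PAPER / MB_PER_GB


def storage_cost_eur():
    """Cost of storing the whole archive once."""
    return archive_size_gb() * STORAGE_EUR_PER_GB


def full_archive_download_cost_eur():
    """Bandwidth cost of one download of the entire archive."""
    return archive_size_gb() * BANDWIDTH_EUR_PER_GB


def yearly_bandwidth_cost_eur():
    """Bandwidth cost of all single-paper downloads in one year."""
    downloaded_gb = DOWNLOADS_PER_YEAR * MB_PER_PAPER / MB_PER_GB
    return downloaded_gb * BANDWIDTH_EUR_PER_GB


if __name__ == "__main__":
    print(f"archive size:        {archive_size_gb():,.0f} GB")
    print(f"storage:             {storage_cost_eur():,.0f} EUR")
    print(f"one full download:   {full_archive_download_cost_eur():,.0f} EUR")
    print(f"bandwidth per year:  {yearly_bandwidth_cost_eur():,.0f} EUR")
    print(f"per libraryGB/year:  {yearly_bandwidth_cost_eur() / LIBRARIES:,.0f} EUR")
```

Doubling the average paper size or the bandwidth price only doubles the result, so the conclusion does not hinge on any single estimate being exactly right.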

There remain software and personnel costs. Given that most of the work on scholarly papers is done by scientists whose salary is already paid, only the technical personnel for maintaining, developing and improving the scholarly communication system need to be factored in. Let's say that each library requires on average a staff of 5 full-time employees with a salary of 100k just for journal-related tasks (i.e., a workforce of 45k people working just on scholarly communication). This would bring personnel costs up to about 4.5b worldwide per year. Much of the software for hosting scholarly journals is Open Source or can be acquired at reasonable cost. Let's make the quite unlikely assumption that each library would have to spend about 50k per year on journal-related software to keep everything running. That would add another ~0.5b, for a total running cost of around 5b per year. Given conservative estimates of around 10b in annual revenues for the scholarly publishing economy, this means libraries are being overcharged by at least about 5b every year, if not more. Given the 40% profit margins of publishers, this actually works out quite well: corporate CEOs and their shareholders pocket about 5b worth of taxpayer money each year via library subscriptions.
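The personnel and software estimate can be sketched the same way. Again, the inputs are the assumptions stated above, and the function and key names are hypothetical, chosen only for this illustration. Note that the software figure comes out at 0.45b, which the text rounds up to ~0.5b.

```python
# Assumptions from the text, not measured data.
LIBRARIES = 9_000                    # approximate number of university libraries
STAFF_PER_LIBRARY = 5                # full-time employees for journal-related tasks
SALARY = 100_000                     # yearly salary per employee
SOFTWARE_PER_LIBRARY = 50_000        # yearly software budget per library
PUBLISHING_REVENUE = 10_000_000_000  # conservative estimate of yearly industry revenue


def running_costs(libraries=LIBRARIES,
                  staff_per_library=STAFF_PER_LIBRARY,
                  salary=SALARY,
                  software_per_library=SOFTWARE_PER_LIBRARY):
    """Yearly cost of a library-run system under the stated assumptions."""
    staff = libraries * staff_per_library
    personnel = staff * salary
    software = libraries * software_per_library
    return {
        "staff": staff,
        "personnel": personnel,
        "software": software,
        "total": personnel + software,
    }


if __name__ == "__main__":
    costs = running_costs()
    overcharge = PUBLISHING_REVENUE - costs["total"]
    print(f"workforce:  {costs['staff']:,} people")
    print(f"personnel:  {costs['personnel'] / 1e9:.2f}b per year")
    print(f"software:   {costs['software'] / 1e9:.2f}b per year")
    print(f"total:      {costs['total'] / 1e9:.2f}b per year")
    print(f"difference to current revenue: {overcharge / 1e9:.2f}b per year")
```

Since every input is a keyword argument, it is easy to try less generous staffing or salary figures and see how the gap to current publishing revenue changes.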

I say it's time we spend these 5b per year in a more meaningful way.


These are the questions Karen Harker finds are still left to be answered:

- What is the best way to measure the costs of journals? Per subscription? Per article? Per usage?

- Where can we get this data?

- How do library expenditures compare with listed prices?

- How do we measure costs per title of journal packages?

- What have been the trends in content growth per subscription?

- What is the usage of the supplemental data? How many journals provide it? How many require a subscription to access it?

- Are we buying too much? Is the growth in content truly worth our money?

- What is the extent of content that is provided with each subscription? How far back are the archives with the subscription? How much are the archives used?

- What are the true costs of maintaining ejournals?


Posted on Thursday 17 January 2013 - 15:00:07 comment: 2


Besides all the raging enthusiasm and worldwide adoption of open access principles, there is also the occasional skeptical voice out there (to put it mildly). One voice that has been growing louder over the last few years is that of scholarly societies. The reason they are troubled is that many of these learned societies derive a large proportion of their revenue from subscription-based journal publishing: many libraries subscribe to these journals, allowing the money from many non-members (funding the libraries) to pay for the activities of the members of these societies. A recent article in the Times Higher Education quotes Dame Finch (from the Finch report):


"Different learned societies will take different views of where their interests lie and whether it is appropriate to modify their [journals'] business models. For the foreseeable future, they could decide to remain subscription journals,"

Apparently, some society members of her committee have voiced concerns about traditional revenue streams. These concerns are surely well-founded: if funders require open access publications, who will want to read journals which do not contain any publicly funded research? Clearly, societies will have to embrace open access publishing if they strive to stay in the publishing business.

With regard to the societies' resulting revenue stream, however, a few things can be said. For one, one can question the practice of charging non-members for member activities without their consent. Moreover, open access does not necessarily entail a loss of revenue. Some open access publishers are indeed profitable. Perhaps more important, however, is the question of how seriously the concerns of learned societies really ought to be taken in the long term: would such concerns be taken equally seriously if these societies were deriving revenue from the Pony Express or the telegraph or some other outdated technology, instead of subscription publishing? The world is moving on and so will learned societies. After all, their members are scientists - they'll find a solution to their problem.

See also: part I: illusions, part II: visions.

Posted on Thursday 17 January 2013 - 11:02:24 comment: 0


In our article, we describe our vision of libraries taking over the task of archiving scholarly articles and making them accessible, as I have suggested here numerous times. However, this vision does not immediately address the problem of accessing back-issue archives, which largely still remain in the hands of toll-access corporate publishers. Micah Allen suggests exercising a little bit of civil disobedience and using a mash-up of online technologies to liberate scholarly articles in a crowdsourcing effort. In two separate posts he first outlines the idea and then provides detailed technical instructions on how to go about making research accessible à la Aaron Swartz. He calls it the #papester initiative (think Napster).

The general idea is to use the Twitter hashtag #icanhazpdf to locate in-demand articles behind paywalls; then those with access to the articles deposit the PDF versions in a publicly accessible folder, maybe on DropBox, simply using DropBox Linker. One could also try to deposit all the PDF files in torrents, using magnet links to locate them. These URNs could be used to aggregate any comments, links, citations and other usage data to arrive at a whole suite of post-publication impact data. In fact, this sort of distributed storage and archiving system is extremely robust and sustainable, and the use of URNs allows for permanent identifiers which are at least as reliable as DOIs (if not more so). This is precisely why I find the combination of torrent technology and magnet links ideal for the technical implementation of a library-based scholarly communication system.

Head on over to Micah's blog to read more about all the juicy and delicious #papester details.

See also: part I: illusions, part III: concerns.


Posted on Thursday 17 January 2013 - 10:45:39 comment: 0


Open Access is really taking off these days, at least if measured by the number of people chiming in with opinions, visions and concerns. Today saw not only the deposition of our paper on how journal rank is like homeopathy or astrology on ArXiv, but Mike Taylor also cites Michael Eisen in an aptly titled article "Hiding your research behind a paywall is immoral" as noting that "fewer than half of biology hires at Berkeley in the last decade have published in Science, Nature or Cell". Although there is still no citable evidence to that effect I know of, it underscores the empirical data we do review in our article: it is irrelevant where scientific discoveries are published. Journal rank is a figment of our imagination and does not stand up to scientific scrutiny.

Just making journal articles open access will only cure one symptom of the disease that is crippling science today. We need to rid ourselves of the illusion of journal rank and instead rely on scientific methods to perform the sorting, filtering, evaluation and discovery tasks we currently bestow on journal rank. Dan Cohen echoes that sentiment when he demands in Wired that "To Make Open Access Work, We Need to Do More Than Liberate Journal Articles". His article emphasizes (as we do in ours) that the important development that now needs to happen is to change the incentives for scientists, to align them better with what is good for science. He emphasizes the need to replace journal rank with post-publication review, focusing on the article, i.e., the scientific discovery, rather than on the container, i.e., the journal.

See also: part II: visions, part III: concerns.


Posted on Thursday 17 January 2013 - 10:19:03 comment: 7
