Thomson Reuters' Impact Factor (IF) is supposed to measure how often the average publication in a scientific journal is cited, and thus to provide a quantitative basis for ranking journals. However, there are (at least) three major problems with the IF:

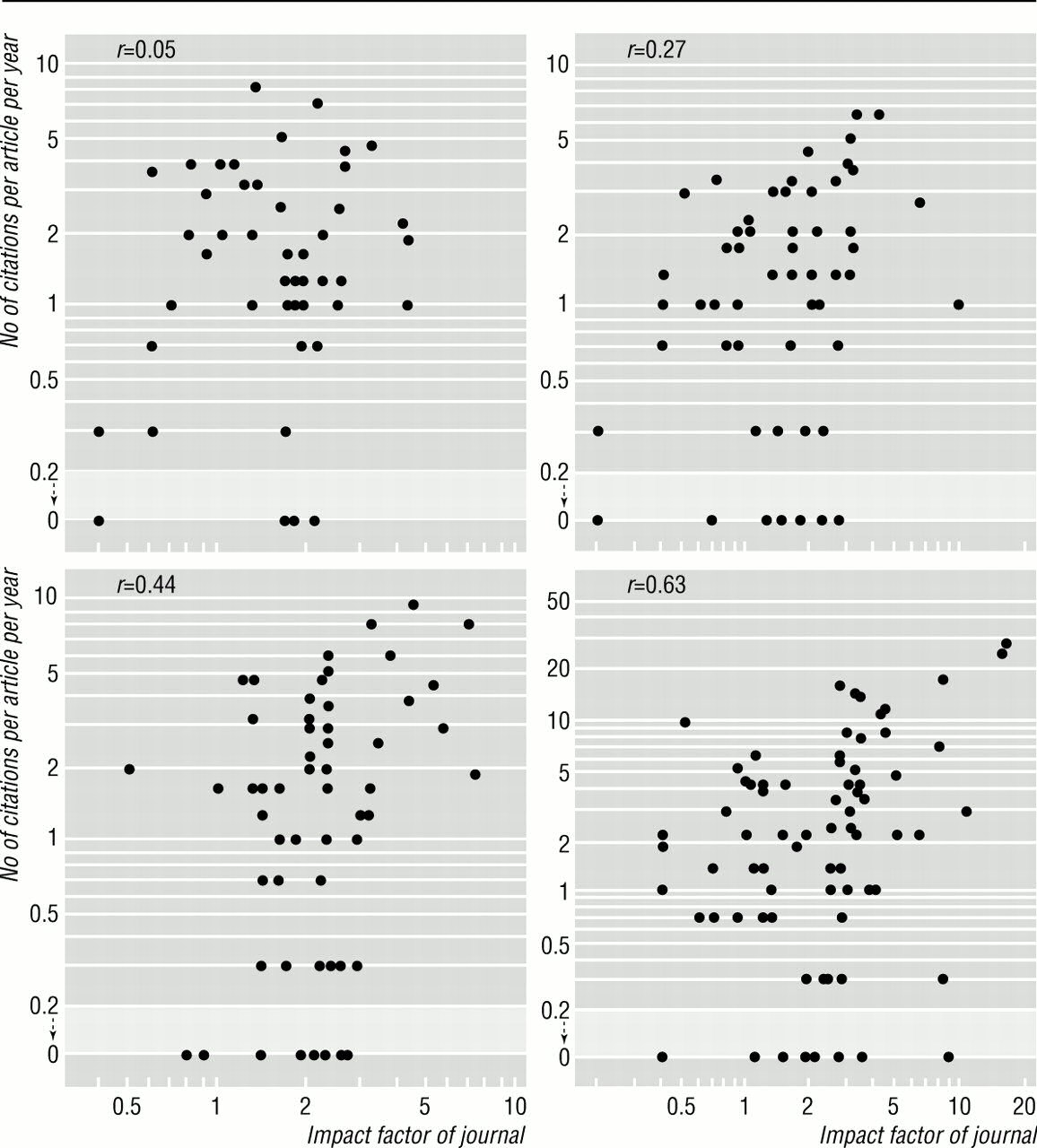

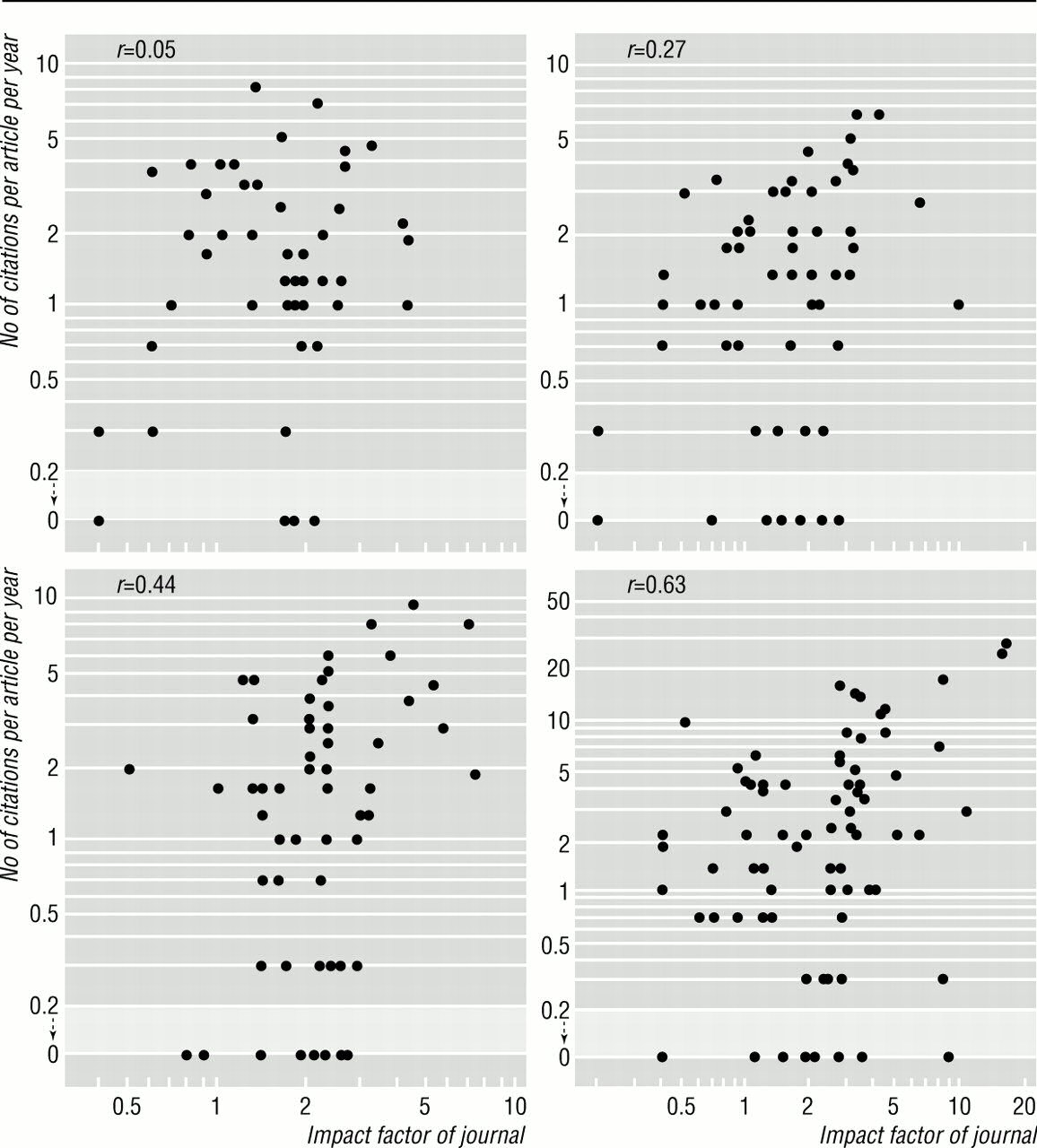

Fig. 1: Four examples of publications from individual researchers. The actual citations of each publication are plotted against the Impact Factor of the journal it was published in (image source).

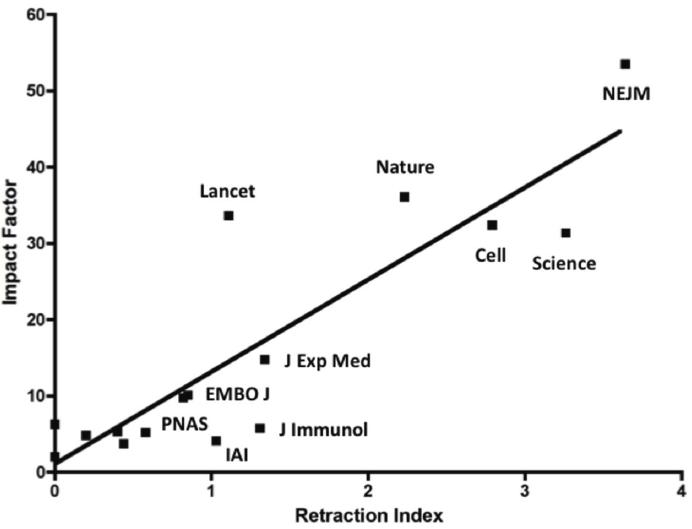

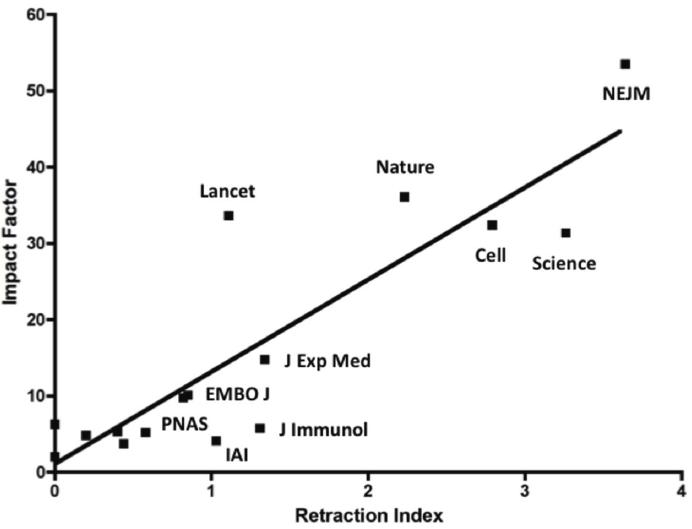

- The IF is negotiable and doesn't reflect actual citation counts (source)

- The IF cannot be reproduced, even if it reflected actual citations (source)

- The IF is not statistically sound, even if it were reproducible and reflected actual citations (source)

Compare these correlations to the recently published correlation between retractions and Impact Factor (Infection and Immunity, doi:10.1128/IAI.05661-11).

Now, one would need to do some thorough quantification and testing of this, but at first glance it appears pretty obvious to me that retractions are a much better predictor of Impact Factor than citations. Can anyone test this hypothesis?
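One possible starting point for such a test, offered as a rough sketch and not a finished analysis: compute a correlation coefficient for each relationship and compare the two with Fisher's z-transformation. This assumes the two samples are independent (per-article citations in one, per-journal retraction rates in the other) and that Pearson's r is an acceptable summary, which is doubtful for heavily skewed citation counts; a rank correlation may be the better choice. The function names `pearson_r` and `compare_independent_correlations` are made up for this illustration, and the numbers in the demo are placeholders, not data from Seglen or from Fang & Casadevall.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 4:
        raise ValueError("need two equal-length samples with at least 4 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def compare_independent_correlations(r1, n1, r2, n2):
    """Fisher z-test for the difference between two independent correlations.

    r1 and r2 must lie strictly between -1 and 1; n1 and n2 must exceed 3.
    Returns the z statistic and a two-sided p-value under a normal
    approximation.
    """
    z1 = math.atanh(r1)  # Fisher transformation of the first correlation
    z2 = math.atanh(r2)  # Fisher transformation of the second correlation
    standard_error = math.sqrt(1.0 / (n1 - 3) + 1.0 / (n2 - 3))
    z = (z1 - z2) / standard_error
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))
    return z, p_two_sided


if __name__ == "__main__":
    # Placeholder values for illustration only, NOT real measurements.
    journal_if = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0]
    retraction_index = [0.10, 0.20, 0.25, 0.50, 0.60, 0.80]
    article_journal_if = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0]
    article_citations = [30, 5, 12, 40, 8, 20]

    r_retractions = pearson_r(journal_if, retraction_index)
    r_citations = pearson_r(article_journal_if, article_citations)
    z, p = compare_independent_correlations(
        r_retractions, len(journal_if), r_citations, len(article_citations)
    )
    print(f"r(retractions, IF) = {r_retractions:.3f}")
    print(f"r(citations, IF)   = {r_citations:.3f}")
    print(f"z = {z:.3f}, two-sided p = {p:.3f}")
```

With real data, the per-journal retraction index and the per-article citation counts would replace the placeholder lists. With samples as small as the ones in the demo, the test has very little power, so a non-significant result would say almost nothing.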

Fang, F., & Casadevall, A. (2011). Retracted science and the retraction index. Infection and Immunity. DOI: 10.1128/IAI.05661-11

Seglen, P. O. (1997). Why the impact factor of journals should not be used for evaluating research. BMJ (Clinical research ed.), 314(7079), 498-502. PMID: 9056804

Posted on Thursday 18 August 2011 - 09:59:22
